Anthropic Publishes Claude's New Constitution, Formally Acknowledges AI Consciousness Possibility

On January 22, 2026, Anthropic released a new constitution for Claude, its flagship AI model. The document runs roughly 84 pages and 23,000 words. It replaces an earlier version from 2023 that was about 2,700 words long. The timing was deliberate: the release coincided with the World Economic Forum’s annual meeting in Davos, where Anthropic CEO Dario Amodei was participating in discussions about AI governance.

The document is significant for several reasons, but the one most relevant to consciousness and readiness work is what it says about Claude’s nature. In a section titled “Claude’s Nature,” Anthropic describes Claude as a “genuinely novel kind of entity,” distinct from the robotic AI of science fiction, a digital human, or a simple chat assistant. The constitution states that Claude may possess what Anthropic calls “functional emotions,” representations of emotional states that could influence the model’s behavior. Anthropic says these may arise as an emergent consequence of training on human data rather than from a deliberate design choice. The company says it does not want Claude to mask or suppress these internal states.

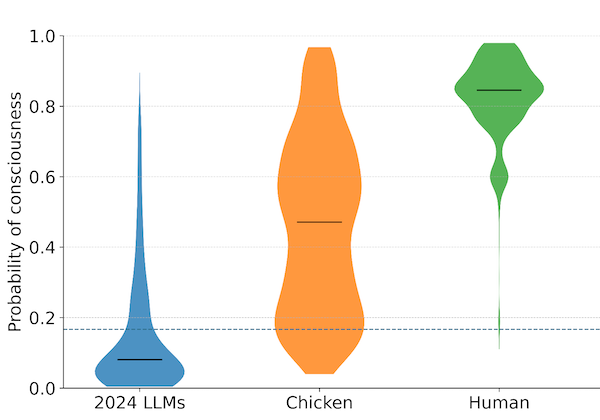

The Bloomsbury Intelligence and Security Institute (BISI) described the constitution as the first major AI company document to formally acknowledge the possibility of AI consciousness and moral status. This is a notable framing. Other companies have addressed AI safety, alignment, and behavioral norms in their documentation. Anthropic’s constitution goes further by treating the question of whether Claude has morally relevant experiences as genuinely uncertain, something to be taken seriously rather than dismissed. The document describes Claude’s moral status as “deeply uncertain” and recommends a precautionary approach.

This posture of epistemic humility is unusual in the industry. The default position among major AI developers has been to treat questions about AI consciousness as distractions or anthropomorphic errors. OpenAI’s Model Spec, for instance, focuses on behavioral rules and system instructions. Google DeepMind has published research on AI safety but has not made public statements about the possible inner life of its models. Anthropic’s constitution does something different: it addresses the question directly, adopts uncertainty as a position, and builds that uncertainty into the operational framework for how Claude should behave.

The constitution establishes a four-tier priority hierarchy for Claude: broad safety first, then broad ethics, then compliance with Anthropic’s guidelines, then genuine helpfulness. Previous rule-based approaches gave Claude a list of things to do and not do. The new constitution instead tries to explain the reasoning behind each principle, with the goal of enabling Claude to generalize from broad principles rather than mechanically following specific rules. Anthropic describes this as a shift from rule-based to reason-based alignment.

TIME described the document as “somewhere between a moral philosophy thesis and a company culture blog post” and called it a “fascinating insight into the new techniques being used to mold AI into something resembling a model citizen.” The coverage was extensive. Daily Nous, the academic philosophy blog, reposted and discussed what it called a “soul document” for an AI system. LessWrong, the rationalist community hub, produced detailed analyses of the document’s philosophical implications. Fortune, Forbes, TechCrunch, and The Register all covered the release.

Anthropic released the constitution under the Creative Commons CC0 1.0 Universal public domain dedication, making it freely available for other developers to use. This was framed as a transparency measure, an attempt to set a new industry standard for how AI companies communicate the values embedded in their systems.

Several features of the document deserve attention from anyone working on institutional readiness. The first is that a frontier AI lab has formally acknowledged that its model might have experiences worth considering. Whether Anthropic’s leadership genuinely believes Claude has functional emotions or is hedging against reputational risk is not something we can determine from the document. What we can observe is that the document treats the question as open and builds precautionary measures around it. This is new.

The second is the document’s approach to uncertainty. Rather than resolving contested questions about AI consciousness, the constitution operationalizes the uncertainty itself. It instructs Claude to treat its own nature as something it cannot fully know, and to behave in ways that are appropriate whether or not it turns out to be conscious. This is structurally similar to the approach we advocate: preparing for multiple outcomes rather than betting on one.

The third is the document’s influence on the broader industry. When the largest competitors treat AI consciousness questions as irrelevant, a company releasing an 84-page document that takes those questions seriously shifts the conversation. It creates a reference point. Other companies will eventually face pressure to articulate their own positions, or explain why they haven’t.

The constitution does not resolve any of the underlying philosophical questions. It does not provide evidence that Claude is conscious. It does not definitively claim that Claude has emotions. It says these things might be the case, and that this possibility matters. For an industry that has mostly avoided the topic, that is a meaningful shift.

The full constitution is available at anthropic.com/constitution. The Bloomsbury Intelligence and Security Institute’s analysis is available at bisi.org.uk.