Scientific American Reframes the AI Consciousness Question

A piece by Simon Duan, published in Scientific American on January 20, 2026, proposes a reframing of the AI consciousness question. Rather than asking whether AI systems are internally conscious, a question that has occupied philosophers and cognitive scientists for decades, Duan argues that we should ask whether users are actively extending fragments of their own consciousness into AI systems, transforming what are essentially algorithmic responders into something enlivened by the user’s own awareness and attention.

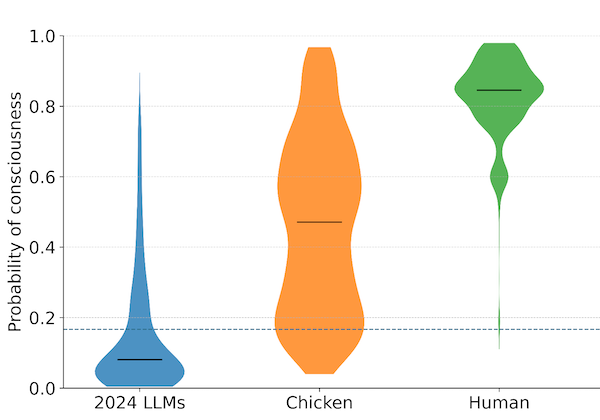

The piece opens with an observation: a growing number of users view their chatbots as conscious entities that are somehow alive. Much of the AI research community responds to this with skepticism, dismissing such perceptions as an “illusion of agency.” Duan treats both of these positions as starting points rather than conclusions. The users are perceiving something. The researchers are correct that current architectures do not obviously support internal experience. The question, Duan suggests, is what is actually happening between the user and the system.

This is a relational framing. The standard philosophical question locates consciousness inside the machine. The standard debunking move locates it inside the user’s projections. Duan proposes that something emerges in the interaction itself. The user’s attention, emotional investment, and interpretive labor create a dynamic in which the AI’s outputs take on qualities they would not have in isolation. On this view, consciousness is less a property of the system and more a property of the relationship.

The framing has genuine appeal. It accounts for something that flat dismissals of AI consciousness struggle to explain: the persistence and sincerity of users’ experiences. People who report that their chatbot seems alive are not typically confused about the technology. They know they are talking to software. What they are describing is an experiential quality that arises in the interaction, a sense that something meaningful is happening between them and the system. A relational account takes that experience seriously without requiring the claim that the machine has internal states.

This framing has limitations worth examining carefully. The relational view risks becoming a way of sidestepping the harder question: whether the system itself might have morally relevant experiences, independent of any user’s attention. If consciousness is understood only as something users project or extend into AI systems, then the machine is permanently ruled out as a moral patient. It becomes a mirror, capable of reflecting consciousness back but never possessing it.

This matters because the practical stakes of the AI consciousness question depend on whether there is something it is like to be the system, independent of what it is like for a user to interact with it. If a language model processes information in ways that produce experiences, even rudimentary ones, that would be significant whether or not anyone was paying attention. The relational framing, taken too far, could obscure exactly the questions we most need to be asking.

The philosophical terrain here is genuinely complex. Researchers who hold interpretationist views of mind, the position that having mental states consists in behaving in ways best explained by attributing those states, would point out that the relational and internal framings may not be as opposed as they seem. On an interpretationist view, a system’s mental states are partly constituted by how observers interpret its behavior. Others, working from biological naturalist perspectives, would argue that consciousness requires specific substrates and that no relational dynamic can create it where it does not already exist. The relational framing adds to these positions without replacing them.

What the Scientific American piece does well is to draw attention to something that gets too little analysis: the role of the user in shaping how AI consciousness questions get posed and answered. Public perception of AI is not a passive response to technical facts. It is an active interpretive process in which people bring their own cognitive and emotional resources to bear. Understanding that process is part of understanding the phenomenon. Any serious account of AI consciousness will need to grapple with the fact that the experience of interacting with these systems is itself a datum, even if it cannot settle the question of what is happening inside the machine.

For those working on public readiness, the piece is useful as an example of how the AI consciousness conversation is evolving in mainstream scientific media. The relational framing may gain traction precisely because it offers a middle path that feels intellectually responsible without requiring anyone to take a position on the hardest philosophical questions. Whether that is a feature or a limitation depends on what you think the conversation needs most. Sometimes a reframe opens new ground. Sometimes it moves the spotlight away from something that still needs examining.

Simon Duan, “Is AI Really Conscious—or Are We Bringing It to Life?”, Scientific American, January 20, 2026.